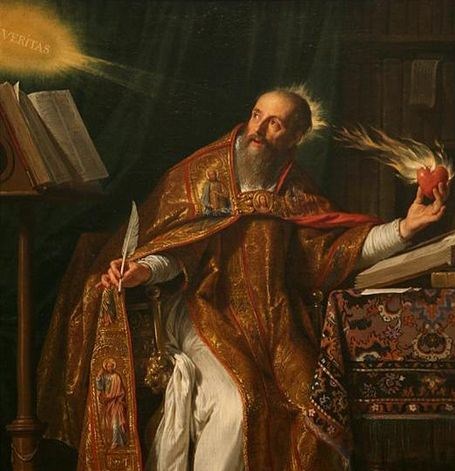

Writing for Gizmodo, Mat Honan describes his experience at the massive Consumer Electronics Show in Las Vegas, and it reads like a passage from Augustine’s Confessions, had Augustine been writing in the 21st rather than the 5th century.

The quasi-religious overtones begin early on when Honan tells us, “There was ennui upon ennui upon ennui set in this amazing temple to technology.”

Then, a little further on, Honan writes:

“There is a hole in my heart dug deep by advertising and envy and a desire to see a thing that is new and different and beautiful. A place within me that is empty, and that I want to fill it up. The hole makes me think electronics can help. And of course, they can.

They make the world easier and more enjoyable. They boost productivity and provide entertainment and information and sometimes even status. At least for a while. At least until they are obsolete. At least until they are garbage.

Electronics are our talismans that ward off the spiritual vacuum of modernity; gilt in Gorilla Glass and cadmium. And in them we find entertainment in lieu of happiness, and exchanges in lieu of actual connections.

And, oh, I am guilty. I am guilty. I am guilty.

I feel that way too. More than most, probably. I’m forever wanting something new. Something I’ve never seen before, that no one else has. Something that will be both an extension and expression of my person. Something that will take me away from the world I actually live in and let me immerse myself in another. Something that will let me see more details, take better pictures, do more at once, work smarter, run faster, live longer.”

Here is the confession, the thrice-repeated mea culpa, alongside a truly Augustinian account of our disordered attachments and loves, complete with a Pascalian nod to the diversionary nature of our engagement with technology.

I call this an Augustinian account not only because of the religiously inflected language and the confessional tone. There is also the explicit frame of an unfulfilling quest to fill a primordial emptiness. Augustine’s Confessions amounts to a retrospective narrative of the spiritual quest that takes him from dissatisfaction to dissatisfaction until it culminates in his conversion. He famously frames his narrative at the outset when he prays, “You have made us for yourself, and our hearts are restless till they find their rest in you.” The restless heart knows its own emptiness and seeks, often heroically and tragically, to fill it. It loves and seeks to be loved, but it loves all the wrong things.

Pascal, writing in the shadow of Augustine’s influence, put it thus:

“What else does this craving, and this helplessness, proclaim but that there was once in man a true happiness, of which all that now remains is the empty print and trace? This he tries in vain to fill with everything around him, seeking in things that are not there the help he cannot find in those that are, though none can help, since this infinite abyss can be filled only with an infinite and immutable object; in other words by God himself.”

In a post titled “Making Holes In Our Hearts,” Kevin Kelly agrees to a point with Honan’s diagnosis, but his interpretation is quite different and also worth quoting at length. Here is Kelly:

If we are honest, we must admit that one aspect of the technium is to make holes in our heart. One day recently we decided that we cannot live another day unless we have a smart phone, when a dozen years earlier this need would have dumbfounded us. Now we get angry if the network is slow, but before, when we were innocent, we had no thoughts of the network at all. Now we crave the instant connection of friends, whereas before we were content with weekly, or daily, connections. But we keep inventing new things that make new desires, new longings, new wants, new holes that must be filled.

Yes, this is what technology does to us. Some people are furious that our hearts are pierced this way by the things we make. They see this ever-neediness as a debasement, a lowering of human nobility, the source of our continuous discontentment. I agree that it is the source. New technology forces us to be always chasing the new, which is always disappearing under the next new, a salvation always receding from our grasp.

But I celebrate the never-ending discontentment that the technium brings. Most of what we like about being human is invented. We are different from our animal ancestors in that we are not content to merely survive, but have been incredibly busy making up new itches which we have to scratch, digging extra holes that we have to fill, creating new desires we’ve never had before.

Kelly is on to something here. Discontentment is generative. Dissatisfaction can be productive. When Cain, having murdered his brother, is cursed to be forever a wanderer alienated from God and family, he builds a city in response. Here is an allegory to match Kelly’s observation. The perpetually wandering, alienated heart builds and makes and creates.

But does it follow that we should then celebrate discontentment, dissatisfaction, and unhappiness? I don’t see how. It is hard to cheer on misery, and it is a certain misery that we are talking about here. Perhaps the more appropriate response is the kind of plaintive admiration we reserve for the tragic hero. Such heroes may possess a real nobility, but it is finally consumed in despair and destruction.

The narrator of Cain’s story tells us that Cain built his city in the land called Nod, a name that echoes the Hebrew word for “wandering.” This touch of literary artistry poignantly suggests that even surrounded by the accouterments of civilization the human soul wanders lost and alienated – never satisfied, never home, never secure.

There is at least one other reason why we need not celebrate generative misery. Misery is not the only fount of human creativity. Love, wonderment, compassion, kindness, curiosity, beauty — all of these might also set us to work and marvelously so.

Augustine understood that finding rest for his restless heart in the love of God did not necessarily extinguish all other loves. It merely reordered them. Having found the kind of satisfaction and happiness that our stuff (for lack of a more inclusive word) can never bring does not mean that we can never again create or enjoy the fruits of human creativity. In fact, it likely means that we may create and enjoy more fully because such creation and enjoyment will not be burdened with the unbearable weight of filling the primordial vacuum of the human heart.

The simplest and only way to enjoy penultimate and impermanent things is to resist the temptation to invest them with the significance and adoration that only ultimate and permanent things can sustain.