It is almost impossible to read about the ethics of autonomous vehicles without encountering some version of the trolley car problem. You’re familiar with the general outline of the problem, I’m sure. An out-of-control trolley car is barreling toward five unsuspecting people on a track. You are able to pull a lever and redirect the trolley toward another track, but there is one person on this track who will be hit by the trolley as a result. What do you do? Nothing and let five people die, or pull the lever and save five at the expense of one person’s life?

The thought experiment has its origins in a paper by the philosopher Philippa Foot on abortion and the concept of double effect. I’m not sure when it was first invoked in the context of autonomous vehicles, but I first came across trolley car-style hypothesizing about the ethics of self-driving cars in a 2012 essay by Gary Marcus, which I learned about in a post on Nick Carr’s blog. The comments on that blog post, by the way, are worth reading. In response, I wrote my own piece reflecting on what I took to be more subtle issues arising from automated ethical systems.

More recently, following the death of a pedestrian who was struck by one of Uber’s self-driving vehicles in Arizona, Evan Selinger and Brett Frischmann co-authored a piece at Motherboard using the trolley car problem as a way of thinking about the moral and legal issues at stake. It’s worth your consideration. As Selinger and Frischmann point out, the trolley car problem tends to highlight drastic and deadly outcomes, but there are a host of non-lethal actions of moral consequence that an autonomous vehicle may be programmed to take. It’s important that serious thought be given to such matters now, before technological momentum sets in.

“So, don’t be fooled when engineers hide behind technical efficiency and proclaim to be free from moral decisions,” the authors conclude. “‘I’m just an engineer’ isn’t an acceptable response to ethical questions. When engineered systems allocate life, death and everything in between, the stakes are inevitably moral.”

In a piece at the Atlantic, however, Ian Bogost recommends that we ditch the trolley car problem as a way of thinking about the ethics of autonomous vehicles. It is, in his view, too blunt an instrument for serious thinking about the ethical ramifications of autonomous vehicles. Bogost believes “that much greater moral sophistication is required to address and respond to autonomous vehicles.” The trolley car problem blinds us to the contextual complexity of morally consequential incidents that will inevitably arise as more and more autonomous vehicles populate our roads.

I wouldn’t go so far as to say that trolley car-style thought experiments are useless, but, with Bogost, I am inclined to believe that they threaten to eclipse the full range of possible ethical and moral considerations in play when we talk about autonomous vehicles.

For starters, the trolley car problem, as Bogost suggests, loads the deck in favor of a utilitarian mode of ethical reflection. I’d go further and say that it stacks the deck in favor of action-oriented approaches to moral reflection, whether rule-based or consequentialist. Of course, it is not altogether surprising that when thinking about moral decision making that must be programmed or engineered, one is tempted by ethical systems that may appear to reduce ethics to a set of rules to be followed or calculations to be executed.

In trolley car scenarios involving autonomous vehicles, it seems to me that two things are true: a choice must be made and there is no right choice.

There is no right answer to the trolley car problem. It is a tragedy either way. The trolley car problem is best thought of as a question to think with, not a question to answer definitively. The point is not to find the one morally correct way to act but to come to feel the burden of moral responsibility.

Moreover, when faced with trolley car-like situations in real life, rare as they may be, human beings do not ordinarily have the luxury to reason their way to a morally acceptable answer. They react. It may be impossible to conclusively articulate the sources of that reaction. If there is an ethical theory that can account for it, it would be virtue ethics, not varieties of deontology or consequentialism.

If there is no right answer, then, what are we left with?

Responsibility. Living with the consequences of our actions. Justice. The burdens of guilt. Forgiveness. Redemption.

Such things are obviously beyond the pale of programmable ethics. The machine, with which our moral lives are entwined, is oblivious to such subjective states. It cannot be meaningfully held to account. But this is precisely the point. The really important consideration is not what the machine will do, but what the human being will or will not experience and what human capacities will be sustained or eroded.

In short, the trolley car problem leads us astray in at least two related ways. First, it blinds us to the true nature of the equivalent human situation: we react, we do not reason. Second, building on this initial misconstrual, we then fail to see that what we are really outsourcing to the autonomous vehicle is not moral reasoning but moral responsibility.

Katherine Hayles has noted that distributed cognition (distributed, that is, among humans and non-humans) implies distributed agency. I would add that distributed agency implies distributed moral responsibility. But it seems to me that moral responsibility is the sort of thing that does not survive such distribution. (At the very least, it requires new categories of moral, legal, and political thought.) And this, as I see it, is the real moral significance of autonomous vehicles: they are but one instance of a larger trend toward a material infrastructure that undermines the plausibility of moral responsibility.

Distributed moral responsibility is just another way of saying deferred or evaded moral responsibility.

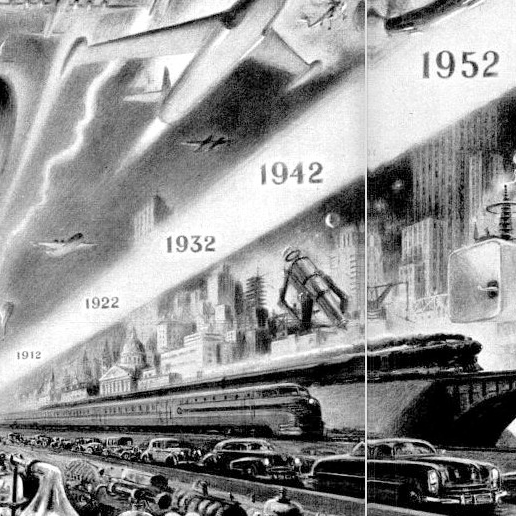

The trajectory is longstanding. Here is Jacques Ellul commenting on the challenge modern society poses to the possibility of responsibility.

Let’s consider this from another angle. The trolley car problem focuses our ethical reflection on the accident. As I’ve suggested before, what if we were to ask not “What could go wrong?” but “What if it all goes right?” My point in inverting this query is to remind us that technologies that function exactly as they should and fade seamlessly into the background of our lived experience are at least as morally consequential as those that cause dramatic accidents.

Well-functioning technologies we come to trust become part of the material infrastructure of our experience, which plays an important role in our moral formation. This material infrastructure, the stuff of life with which we as embodied creatures constantly interact, both consciously and unconsciously, is partially determinative of our habitus, the set of habits, inclinations, judgments, and dispositions we bring to bear on the world. This includes, for example, our capacity to perceive the moral valence of our experiences or our capacity to subjectively experience the burden of moral responsibility. In other words, it is not so much a matter of specific decisions, although these are important, but of underlying capacities, orientations, and dispositions.

I suppose the question I’m driving at is this: What is the implicit moral pedagogy of tools to which we outsource acts of moral judgment?

While it might be useful to consider the trolley car, it’s important as well that we leave it behind for the sake of exploring the fullest possible range of challenges posed by emerging technologies with which our moral lives are increasingly entangled.


But what happens when the human and machine get blended? https://theconversation.com/its-not-my-fault-my-brain-implant-made-me-do-it-91040